Image generated by Google Gemini

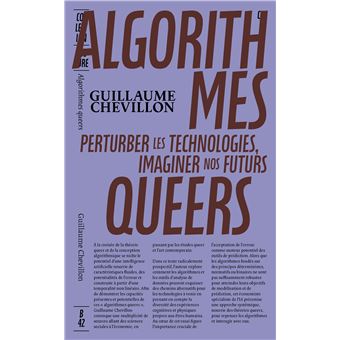

Artificial intelligence is no longer just a futuristic dream: it’s an invisible engine transforming our writing, our decision-making and, increasingly, even our deepest thoughts. Guillaume Chevillon, ESSEC professor and director of the ESSEC Metalab Institute for Artificial Intelligence, Data and Society, recently published a book, “Algorithmes queers” (Queer Algorithms), in which he analyzes two linked questions: how can we control and improve the short-term effects of algorithms, and how can we control, or even shape, their long-term effects?

Dr. Chevillon is also an econometrician, meaning he conducts research at the intersection of the social sciences and data science. He notes that algorithms are often seen as the product of a positivist, apolitical discipline because they rely on mathematics. Yet they are built on human constructs, and the technical and economic choices behind them cannot be truly neutral. An algorithm’s success depends on its ability to optimize precise statistical criteria for an immediate impact, without considering the long-term consequences and their radical uncertainty. Who could have imagined that Facebook, originally a networking site for college students, would play an important role not only in the ‘Color Revolutions’ in post-Soviet states and during the Arab Spring, but also in the spread of disinformation and the rise of polarization in Western democracies? While AI has many useful applications, it also influences our preferences and can shape what we collectively see as normal or desirable.

What can economic history teach us about the failure of rigid optimization?

The pursuit of short-term predictive accuracy echoes the historical limitations of modeling in macroeconomics and finance over the past century. When a central bank produces and publishes inflation forecasts, it alters individual expectations and therefore the very future it seeks to predict. This distinctive feature of the social sciences, where observation influences the subject of study, sets them apart from other scientific disciplines. The Lucas critique, formalized in 1976 by the future Nobel laureate Robert Lucas, captures this paradox: no algorithm designed to influence human behavior can guarantee its intended outcome, since its implementation changes the very dynamics it was meant to govern. Introducing a new control tool reshapes the behavioral rules of those it aims to regulate. The subprime financial crisis offers one example: algorithms meant to mitigate risk instead became toxic because they lacked adaptability and failed to account for human ingenuity.

This means we need flexible and resilient systems, a form of algorithmic ‘plasticity’. Dr. Chevillon believes that the work of researchers who developed queer studies and queer theory offers a framework to guide how algorithms and AI are designed, how we interact with them, and how we anticipate the future. The word “queer” was originally a slur against the LGBTQIA+ community, but it has since been reclaimed in a positive sense and has given rise to a field of practices and reflections rooted in minority experiences. For sociologists Inès and Philippe Liotard [1], “queering means to positively transform, deconstruct, change, and question the bases of knowledge and norms to produce critical, living, and open ideas.”

Dr. Chevillon draws on themes central to contemporary social engineering and to alternative approaches: the power to predict and act, control over narratives and thus over data, our ability to defy linear, deterministic paths, our zest for experimentation, our desire for freedom, and solidarity. A queer approach to algorithms, meaning a methodology that prioritizes open “possibilities, gaps and overlaps” [2], could make systems more robust. The user of an algorithm, a manager for example, should seek antifragility [3] rather than rigid precision, accepting errors and uncertainty as key elements of decision-making so as not to build in a structural failure that may only reveal itself later.

The geopolitics of algorithms and new power dynamics

A significant share of economic power today is shifting toward those who control the means of prediction: data, computational infrastructure, technical expertise, and energy [4]. These actors do more than merely forecast what might happen; they actively shape their environment so that their predictions come true. This dynamic is reinforced by oligopolistic conditions, which arise naturally from increasing returns to scale in data and AI. The result is a self-reinforcing cycle: more data improves algorithm training and service quality, which attracts more users and thus generates even more training data.

This process, which relies on network effects between users, can lead to what essayist Cory Doctorow calls “enshittification” [5]: the quality of a service is progressively degraded as providers extract more and more profit from users who have become hooked on it. It is therefore essential to identify the negative externalities of AI usage, such as the social or mental pollution created by polarization on social networks, inequalities, and the reinforcement of preexisting social hierarchies. Above all, we must avoid adopting a deterministic mindset that attributes all our successes to our choices and talent alone. Such a mindset produces a form of “survivorship bias”, mistakenly attributing success to foresight rather than to simple statistical luck.
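As a rough illustration of how luck can masquerade as foresight, the Python sketch below is a hypothetical toy simulation, not taken from the book. It gives every simulated manager exactly the same 50% chance of getting each decision right. A handful of managers still end up with flawless records purely by chance, and those are the records that are easy to misread as evidence of talent. The function name and parameters are illustrative assumptions.

```python
import random


def count_perfect_records(n_managers: int, n_decisions: int, seed: int = 0) -> int:
    """Count managers who get every call right when each call is a fair coin flip.

    Hypothetical toy model: every manager has the same 50% chance per decision,
    so any perfect record in this simulation is produced by luck alone.
    """
    rng = random.Random(seed)  # fixed seed so the run is reproducible
    perfect = 0
    for _ in range(n_managers):
        # A manager "survives" only if all n_decisions calls come out right.
        if all(rng.random() < 0.5 for _ in range(n_decisions)):
            perfect += 1
    return perfect


if __name__ == "__main__":
    n, t = 10_000, 10
    survivors = count_perfect_records(n, t)
    expected = n / 2 ** t  # number of flawless records expected from chance alone
    print(f"{survivors} of {n} managers have a perfect {t}-decision record "
          f"(about {expected:.1f} expected by pure chance)")
```

With 10,000 managers and 10 decisions each, about 10,000 / 2^10 ≈ 9.8 flawless records are expected from luck alone. The exact count depends on the random seed, but looking only at these "survivors" would suggest skill where none exists in the model.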

How can we adopt reasonable, ethical resource management?

The environmental impact of AI is a major strategic challenge, since storing information accurately and permanently consumes a massive amount of energy. Our brains, by contrast, accept the gradual loss and degradation of information, which consumes far less energy! Tomorrow’s decision-makers could turn this limitation into an advantage by adopting a new stance toward data management, introducing a right to forget and new forms of “machine unlearning” [6] to protect both the environment and the robustness of systems.

Take the recruitment sector: by adopting a more humane philosophy, we can move away from normative typologies that reduce a human being to an abstract statistical profile. By refusing to rely on correlations that are all too often misleading, a recruiter can explore ‘liminal’ zones (those situated between normalized categories) and unique trajectories, which are true drivers of creativity. We should always seek to increase everyone’s agency, their capacity for action, and to understand our connection to the world through how others’ experiences make us feel. In what Bruno Perreau calls “co-appearance” [7], we open up new horizons by walking a mile in someone else’s shoes.

In his new book, Dr. Chevillon also examines how algorithms and AI are designed, in order to uncover ways to make them more secure and robust. He shows that their successes can often be attributed to these same queer characteristics: being less deterministic and hierarchical, more exploratory and cooperative, and often open to making mistakes. These successes point to approaches that algorithm developers could use to strengthen their work. They would also help reduce the impact of political and social barriers, but we must avoid limiting future exploration to the past already encoded in the available data.

What role does collective action play?

We must also work out how to respond to AI when we neither own nor develop the algorithms but still seek to keep control over our future and that of our society. Dr. Chevillon identifies behaviors and practices for interacting with algorithms in ways that shape them and preserve our control: for example, refusing to cooperate or simply not reacting, which lets us take back the reins when faced with their stimuli.

The rise of AI forces us to reorganize ourselves, as a society and also within firms. Work is changing, and we must work together to influence algorithmic choices, building networks that avoid direct confrontation while refusing blind submission. Embracing certain forms of error allows us to escape AI’s snares. To achieve this, we can leverage tactics like non-response (the “playing dead” strategy used by many animals), deliberate forgetting, or repurposing tools to disrupt prescriptive systems. By introducing slack in technology’s gears, we can restore humanity’s capacity for extrapolation and invention.

Our commitment to this technological transformation can also be sustained by a form of joy, which, according to Spinoza, lies at the intersection of influencing and being influenced. Joy will not erase the challenges, but it does provide the energy needed to imagine a more just future where technology supports our desires without predetermining them. By approaching serious topics with subversive lightness, we can build a path where algorithms serve our autonomy and diversity.

References

1. Inès and Philippe Liotard, Queer (Paris: Anamosa, 2025), 32–33.

2. Eve Kosofsky Sedgwick, "Queer and Now," in Tendencies (Durham: Duke University Press, 1993), 8.

3. Nassim Nicholas Taleb, Antifragile: Things That Gain from Disorder (New York: Random House, 2012).

4. See Maximilian Kasy, The Means of Prediction (Chicago: University of Chicago Press, 2025), 5.

5. Cory Doctorow, "The 'Enshittification' of TikTok," Wired Ideas, January 23, 2023, https://www.wired.com/story/tiktok-platforms-cory-doctorow/.

6. For recent examples, see Sijia Liu et al., "Rethinking Machine Unlearning for Large Language Models," Nature Machine Intelligence 7 (2025): 181–194.

7. Bruno Perreau, Sphères d’injustice. Pour un universalisme minoritaire (Paris: La Découverte, 2023), 24, 193–194. Also published as Spheres of Injustice: The Ethical Promise of Minority Presence (MIT Press, 2025).